
Four months after it was initially shown off to the public, OpenAI is finally bringing its new humanlike conversational voice interface for ChatGPT, “ChatGPT Advanced Voice Mode,” to users beyond its initial small testing group and waitlist.

All paying subscribers to OpenAI’s ChatGPT Plus and Team plans will get access to the new ChatGPT Advanced Voice Mode, though the access is rolling out gradually over the next several days, according to OpenAI. It will be available in the U.S. to start.

Next week, the company plans to make ChatGPT Advanced Voice Mode available to subscribers of its Edu and Enterprise plans.

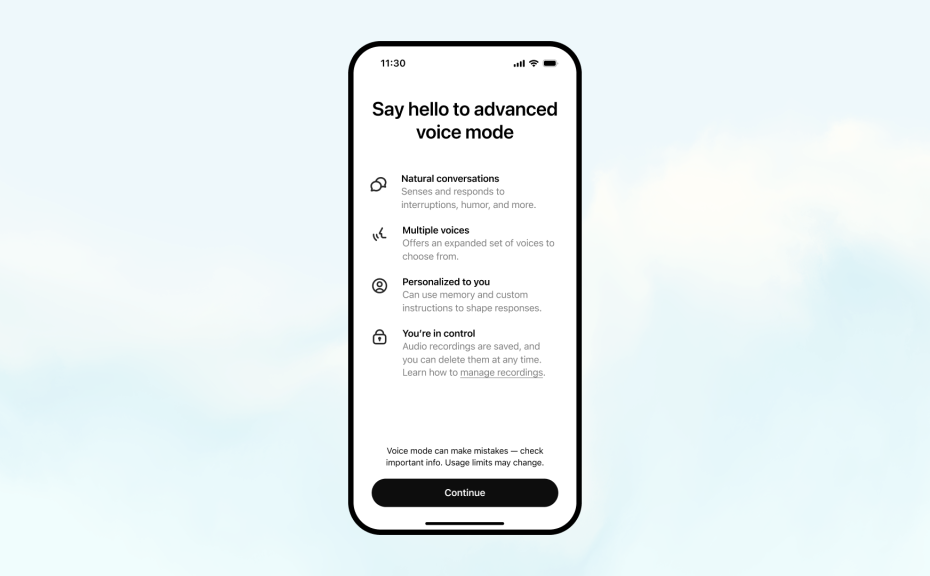

In addition, OpenAI is adding the ability to store “custom instructions” for the voice assistant and “memory” of the behaviors the user wants it to exhibit, similar to features rolled out earlier this year for the text version of ChatGPT.

And it’s shipping five new, different-styled voices today, too: Arbor, Maple, Sol, Spruce, and Vale — joining the previous four available, Breeze, Juniper, Cove, and Ember, which users could talk to using ChatGPT’s older, less advanced voice mode.

This means ChatGPT users, both individuals on the Plus plan and small enterprise teams on the Team plan, can use the chatbot by speaking to it instead of typing a prompt. Users will know they’ve entered Advanced Voice Mode via a popup when they access voice mode on the app.

“Since the alpha, we’ve used learnings to improve accents in ChatGPT’s most popular foreign languages, as well as overall conversational speed and smoothness,” the company said. “You’ll also notice a new design for Advanced Voice Mode with an animated blue sphere.”

OpenAI did not provide voice samples for the five new voices.

These updates are only available on the GPT-4o model, not the recently released preview model, o1. ChatGPT users can also utilize custom instructions and memory to ensure voice mode is personalized and responds based on their preferences for all conversations.

AI voice chat race

Ever since the rise of AI voice assistants like Apple’s Siri and Amazon’s Alexa, developers have wanted to make the generative AI chat experience more humanlike.

ChatGPT has had voices built into it even before the launch of voice mode, with its Read-Aloud function. However, the idea of Advanced Voice Mode is to give users a more human-like conversation experience, a concept other AI developers want to emulate as well.

Hume AI, a startup founded by former Google DeepMind researcher Alan Cowen, released the second version of its Empathic Voice Interface, a humanlike voice assistant that senses emotion based on the pattern of someone’s voice and can be used by developers through a proprietary API.

French AI company Kyutai released Moshi, an open source AI voice assistant, in July.

Google also added voices to its Gemini chatbot through Gemini Live, as it aimed to catch up to OpenAI. Reuters reported that Meta is also developing voices that sound like popular actors to add to its Meta AI platform.

OpenAI says it is making AI voices widely available to more users across its platforms, putting the technology in the hands of far more people than those other firms have.

Launch follows delays and controversy

However, the idea of AI voices conversing in real-time and responding with the appropriate emotion hasn’t always been received well.

OpenAI’s foray into adding voices to ChatGPT has been controversial from the outset. At its May event announcing GPT-4o and the voice mode, people noticed similarities between one of the voices, Sky, and that of the actress Scarlett Johansson.

It didn’t help that OpenAI CEO Sam Altman posted the word “her” on social media, a reference to the movie where Johansson voiced an AI assistant. The controversy sparked concerns around AI developers mimicking voices of well-known individuals.

The company denied it referenced Johansson and insisted that it did not intend to hire actors whose voices sound similar to others.

The company said users are limited to the nine voices from OpenAI. It also said that it evaluated the voice mode’s safety before release.

“We tested the model’s voice capabilities with external red teamers, who collectively speak a total of 45 different languages, and represent 29 different geographies,” the company said in an announcement to reporters.

However, it delayed the launch of ChatGPT Advanced Voice Mode from its initial planned rollout date of late June to “late July or early August,” citing the need to continue safety testing, or “red teaming,” the voice mode to prevent its use in fraud and other wrongdoing. Even then, it launched only to a group of OpenAI-selected initial users, such as University of Pennsylvania Wharton School of Business professor Ethan Mollick.

Clearly, the company thinks it has done enough to release the mode more broadly now — and it is in keeping with OpenAI’s generally more cautious approach of late, working hand-in-hand with the U.S. and U.K. governments and allowing them to preview new models such as its o1 series prior to launch.